Data Governance Policy

Effective from: 1 June 2023, Last updated: 1 June 2023

1. Purpose

Textile Exchange (“Textile Exchange” or “we” or “us” or “our”) data are organizational assets critical to support our mission – to inspire and equip people to accelerate the adoption of preferred materials – and they must be accessible, well managed, and properly secured.

Vision

We envision an organizational data management system that provides secure, defined, quality data whenever and wherever needed in a cost-effective, reusable, and repeatable manner to ensure Textile Exchange remains the trusted authority of preferred materials in the textile industry.

Mission

Our data governance mission is to define and manage a quality data resource that enables Textile Exchange to inspire and equip people to accelerate the adoption of preferred materials in line with the Climate+ goals.

The Data Governance Policy establishes a framework to manage Textile Exchange data efficiently and effectively by:

- Establishing the principles and practices to manage and use data,

- Developing a data-conscious environment that provides secure, well-managed, and reliable data for decision-making, planning, and reporting, and

- Articulating responsibilities for data and systems management.

2. Scope

This policy applies to all staff, service providers, suppliers, and others (collectively, “users”) provided with access to Textile Exchange data and systems and must be adhered to in processing all Textile Exchange data.

The policy provides a framework to govern data and is supplemented by operational policies and procedures (i.e., standards, registers, guidelines, forms, and data agreements), which collectively operationalize data management in Textile Exchange.

Operational Policies

Data Compliance Policy

Effective from: 1 June 2023, Last updated: 1 June 2023

A.1 Purpose

This policy establishes and standardizes Textile Exchange’s level of compliance with data laws, regulations, and best practices for the protection of organizational and personal data.

A.2 Scope

This policy applies to all staff, service providers, suppliers, and others (collectively, “users”) provided with access to Textile Exchange data and systems and must be adhered to in processing all Textile Exchange data.

A.3 Policy Statements

A3.1 Lawful data processing. All data processing by Textile Exchange shall be carried out in a lawful manner and in good faith, and shall only take place if there is a sufficient legal basis for the processing activity.

A3.2 Personal data. Textile Exchange shall protect the rights and privacy of individuals and comply with applicable privacy and data protection laws and regulations.

A.4 Procedures

A4.1 Responsibility. The Data Governance Manager is responsible for supporting and monitoring data compliance within Textile Exchange. It is the responsibility of each Business Lead to work with the Data Governance Manager and Operational Compliance to ensure that all data processing complies with all laws and contractual obligations.

A4.2 Data processing. All data processed by Textile Exchange shall be governed by an agreement that sets out the basis and protocol between the data provider and Textile Exchange. The agreement may take the form of a Terms of Use (TOU), Memorandum of Understanding (MOU), an independent agreement for data processing, or part of a larger agreement between the data provider and Textile Exchange. All agreements should conform to the Privacy Policy.

A4.3 Data processing agreement: Textile Exchange data processing agreements shall comply with the General Data Protection Regulation (GDPR) and other data laws. The agreements should detail (a) roles and responsibilities, (b) scope of data sharing, (c) purpose and intended use of data, (d) period of agreement, (e) method and frequency of data sharing, (f) points of contact, and (g) warranty and limited liability.

A4.4 Processing of personal data: The processing of personal data shall include (a) consent of the data owner, (b) the purpose of the data processing, (c) the scope of the personal data being processed, (d) how the personal data will be processed, and (e) how the personal data will be properly retained, protected, and disposed of.

A4.5 Sensitive personal data: Under no circumstances shall Textile Exchange process sensitive personal data such as racial/ethnic origin, commission/alleged commission of an offense, political opinions, religious or philosophical beliefs, trade union membership, genetic/biometric data, data concerning health, or data concerning a natural person’s sex life/sexual orientation.

A4.6 Request for Textile Exchange data: Requests for data shall be assessed based on (a) data and the class of data requested, (b) requester profile, (c) intended use and disclosure, (d) value proposition and/or reciprocity, and (e) alignment with the Textile Exchange Climate+ strategy. Confidential data and/or personal data shall not be shared without explicit consent from the data source.

A4.7 Non-disclosure agreement. Non-Disclosure Agreements should be used in all situations where controlled, internal and/or confidential information is being disclosed, either as an independent agreement or part of a larger agreement between the data requester and Textile Exchange.

A4.8 Approval process for sharing of Textile Exchange data. Sharing of Textile Exchange data shall take into consideration the sensitivity classification of the data and conform to the Data Sensitivity Classification Policy.

A4.9 Data sharing. Textile Exchange may share data via (a) open-source publication or repository, (b) access managed application or portal, (c) enterprise application interface, and (d) file transfer. Except for open-source publication or repository, all data sharing methods require a Data Sharing Agreement between Textile Exchange and the data requester.

A4.10 Data sharing agreement: Data sharing agreements should detail (a) scope of data sharing, (b) purpose and intended use of data, including the scope and conditions of data transformation, disclosure, claims, and security, (c) period of the agreement, (d) method and frequency of data sharing, (e) points of contact, (f) resources and cost of data sharing, and (g) warranty and limited liability.

Data Sensitivity Classification Policy

Effective from: 1 June 2023, Last updated: 1 June 2023

B.1 Purpose

This policy establishes a framework for classifying data based on its level of sensitivity, value, regulatory conformance, and criticality to Textile Exchange, in order to set baseline security controls for the protection of data.

B.2 Scope

This policy applies to all staff, service providers, suppliers, and others (collectively, “users”) provided with access to Textile Exchange data and systems and must be adhered to in processing all Textile Exchange data.

B.3 Policy Statements

B3.1 Textile Exchange shall classify data according to its level of sensitivity and the impact on Textile Exchange should the data be disclosed, altered, or destroyed without authorization.

B3.2 Textile Exchange shall ensure data is protected according to the sensitivity classification assigned.

B3.3 Any data that may be identifiable to an individual or entity is considered confidential and shall require consent from the data owner before disclosure.

B4. Procedures

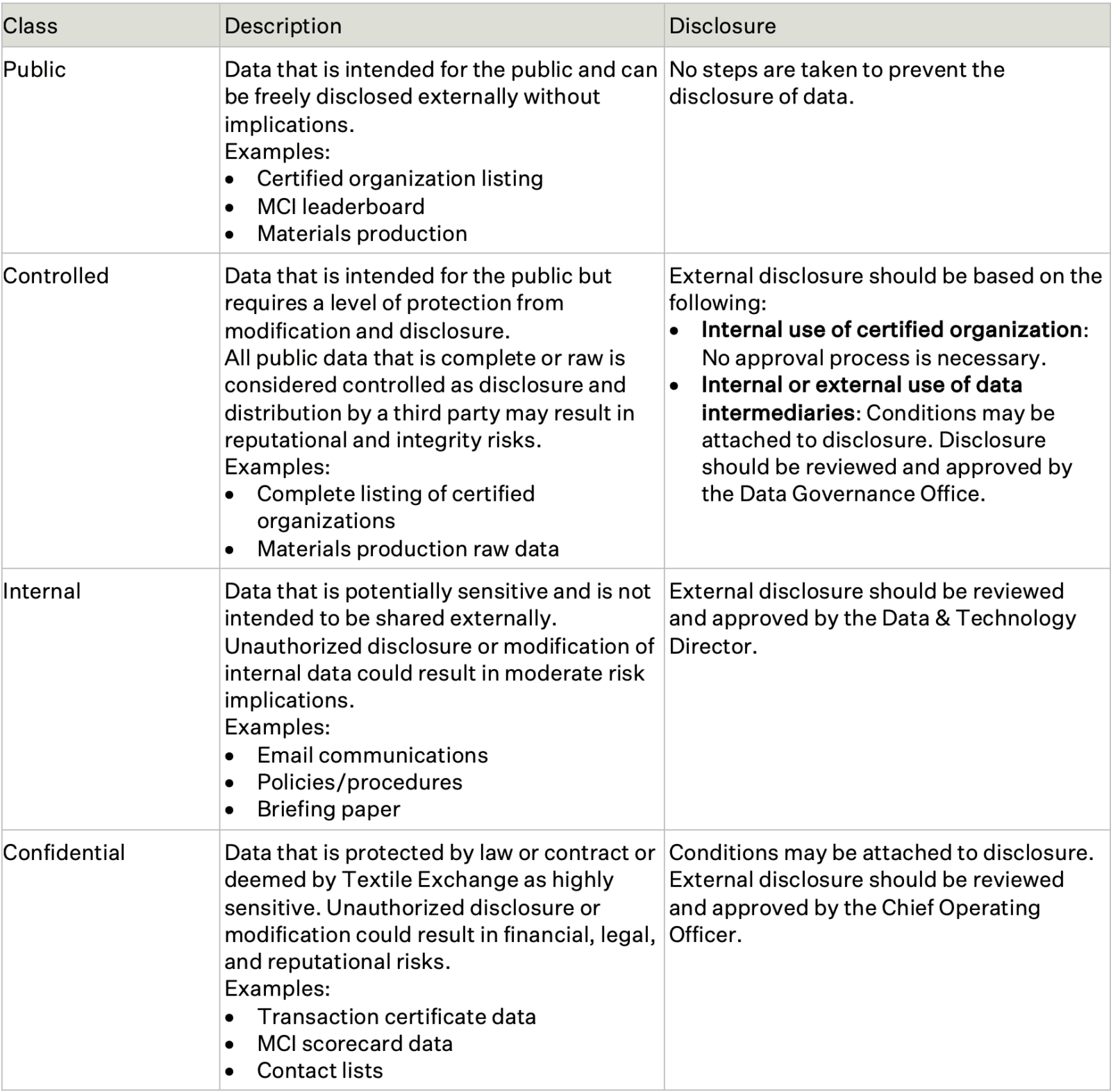

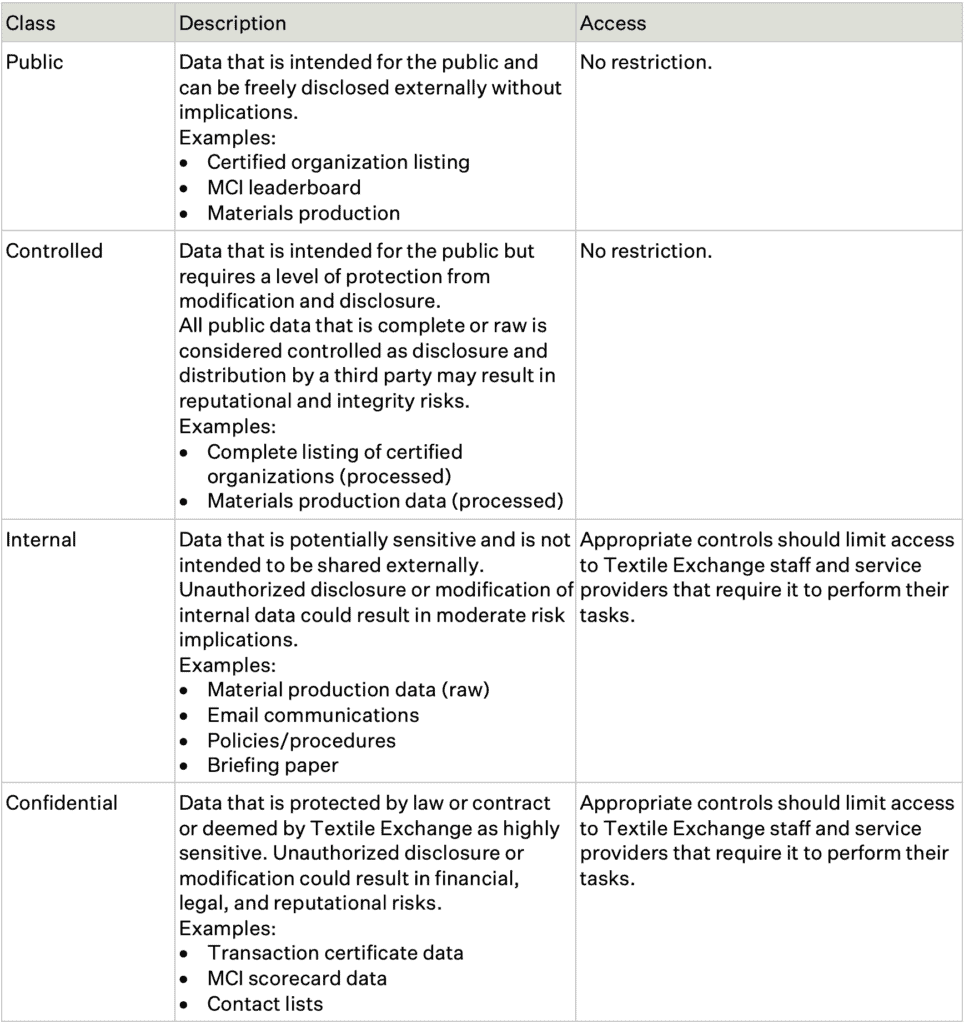

B4.1 Sensitivity classification. All Textile Exchange data should be classified into one of four sensitivity classes: public, controlled, internal, and confidential.

B4.3 Data identifiable to an entity or individual. Any data that is identifiable to an entity or individual is naturally classified as confidential data. The disclosure of this data requires approval from the Chief Operating Officer.
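As an illustration only, the classification rules in B4.1 and B4.3 could be expressed as a small lookup. This is a minimal sketch, not a description of any Textile Exchange system: the names `Sensitivity`, `effective_class`, and `needs_coo_approval` are hypothetical.

```python
from enum import Enum


class Sensitivity(Enum):
    """The four sensitivity classes named in B4.1."""
    PUBLIC = "public"
    CONTROLLED = "controlled"
    INTERNAL = "internal"
    CONFIDENTIAL = "confidential"


def effective_class(proposed: Sensitivity, identifiable: bool) -> Sensitivity:
    """Apply B4.3: data identifiable to an entity or individual is confidential."""
    return Sensitivity.CONFIDENTIAL if identifiable else proposed


def needs_coo_approval(identifiable: bool) -> bool:
    """B4.3: disclosing identifiable data requires Chief Operating Officer approval."""
    return identifiable
```

Under this sketch, a data set proposed as public that contains identifiable records would still be treated as confidential.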

Data Access Policy

Effective from: 1 June 2023, Last updated: 1 June 2023

C1. Purpose

This policy ensures that there is a process in place to manage the access to Textile Exchange data and information systems appropriately and securely.

C2. Scope

This policy applies to all staff, service providers, suppliers, and others (collectively, “users”) provided with access to Textile Exchange data and systems and must be adhered to in processing all Textile Exchange data.

C3. Policy Statements

C3.1 Data should be classified according to an appropriate level of confidentiality, integrity, and availability (see Data Sensitivity Classification Policy) and in accordance with relevant laws, regulations, and contractual requirements (see Data Compliance Policy).

C3.2 Data should be made available to users who need it to carry out their duties and maximize the value of Textile Exchange, taking into account its sensitivity classification.

C3.3 Users with access privileges are responsible for safeguarding the secure access and ethical usage of that data.

C4. Procedures

C4.1 Access to data. Access to data should be granted based on the data sensitivity classification.

C4.2 Managing access. Data Custodians should grant users access based on the data sensitivity classification. Access to internal and confidential data requires authorization from the relevant Business Lead based on (a) legitimate business use, (b) area and level of responsibilities, and (c) training received.
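The access rule in C4.2 can be sketched as a simple check, shown here for illustration only. The sketch makes two assumptions that the policy does not state: that all three criteria (a) to (c) must be met before a Business Lead authorizes access, and that public and controlled data need no Business Lead authorization. The names `AccessRequest` and `may_grant` are hypothetical.

```python
from dataclasses import dataclass

RESTRICTED_CLASSES = {"internal", "confidential"}
ALL_CLASSES = {"public", "controlled"} | RESTRICTED_CLASSES


@dataclass
class AccessRequest:
    data_class: str
    legitimate_business_use: bool = False   # criterion (a)
    responsibilities_match: bool = False    # criterion (b)
    training_received: bool = False         # criterion (c)
    business_lead_authorized: bool = False


def may_grant(request: AccessRequest) -> bool:
    """Return True if a Data Custodian may grant the requested access."""
    if request.data_class not in ALL_CLASSES:
        raise ValueError(f"unknown sensitivity class: {request.data_class}")
    if request.data_class not in RESTRICTED_CLASSES:
        # Assumption: no Business Lead authorization needed for these classes.
        return True
    criteria_met = (
        request.legitimate_business_use
        and request.responsibilities_match
        and request.training_received
    )
    return criteria_met and request.business_lead_authorized
```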

C4.3 User responsibility. Once access is granted, users shall exercise care in using the data and systems to protect data from unauthorized use, disclosure, modification, and/or destruction. Irresponsible use may result in disciplinary measures.

C4.4 System administrator access. Users with administrative access to systems shall only use such access to carry out their responsibilities for Textile Exchange. All administrative access should be periodically reviewed and updated at the completion of a project or after any change in roles and responsibilities.

C4.5 Service providers access. Proposed service providers’ access shall be reviewed by the Data Governance Director. Access privilege should be limited to the data necessary to fulfill the business purpose required of the service provider.

C4.6 Access review. All data access should be documented and reviewed periodically by Data Stewards to revalidate that access remains appropriate. Users’ access to data must be revoked promptly when it is no longer needed to carry out their responsibilities.

C4.7 Suspected non-compliance. All users must immediately report any suspected unauthorized access as security incidents to the Data Governance Manager, in accordance with the Data Security Incident Policy.

Data Quality Policy

Effective from: 1 June 2023, Last updated: 1 June 2023

D1. Purpose

This policy describes the principles and procedures that Textile Exchange must comply with to manage and maintain high-quality data (accuracy, validity, reliability, timeliness, relevance, and completeness) to ensure that the data is fit for its intended use and purpose.

D2. Scope

This policy applies to all staff, service providers, suppliers, and others (collectively, “users”) provided with access to Textile Exchange data and systems and must be adhered to in processing all Textile Exchange data.

D3. Policy Statements

D3.1 Accuracy. Data should be sufficiently accurate for its intended purpose, represented clearly, in enough detail, and collected as close to the point of activity as possible. The costs and effort of collection should be balanced with the importance of the data, timeliness requirements, and the need for accuracy.

D3.2 Validity. Data should be processed in conformance with relevant requirements, rules, and definitions to ensure integrity and consistency.

D3.3 Reliability. Data processes should be clearly defined and stable to ensure the consistency and comparability of data over time. There should be confidence that the data reflect the actual event and improvements reflect real changes rather than variations in data collection approaches or methods.

D3.4 Timeliness. Data should be captured at or as quickly as possible after the event and must be available for the intended use within a reasonable time.

D3.5 Relevance. Data captured should be relevant to the purposes for which it is used. This entails periodic reviews of requirements to reflect changing needs. The amount of data collected should be proportionate to the value gained from it.

D3.6 Completeness. Data needs to be complete, representative, unbiased, and free of redundant or duplicate records. Sufficient data of suitable quality should be collected to draw meaningful conclusions.

D4. Procedures

D4.1 Appropriate procedures. Textile Exchange should clearly define procedures to manage data change requests and reporting requests.

D4.2 Business units should submit data change requests and reporting requests using the Data Change Request Form.

D4.3 Change requests include any creation of, addition to, or alteration of Textile Exchange data and/or systems.

D4.4 Appropriate data processes. Textile Exchange should clearly define and document data processes:

a. Data should be collected once and used for multiple purposes, wherever possible.

b. Data should be checked at the point of collection or as close to it as possible.

c. Where actual or complete data cannot be collected, appropriate sampling or appropriate proxies may be used, if they are clearly recorded in the methodology.

d. Data validation and triangulation should be established to ensure data quality.

e. Data assurance should be established to ensure data integrity.

f. Changes in approaches or methods should be clearly documented and communicated.

g. Missing, incomplete, or invalid data should be recorded and managed to provide an indication of data quality.

h. Where data compromises have been made (use of missing, incomplete, proxy, or invalid data), the resulting limitations of the data should be clear to users.

D4.5 Data conflicts. Conflicting perspectives, approaches, and methods between different stakeholders may occur at any point in the data lifecycle. All data-related issues should first be resolved between the relevant business units and the Data & Technology Platform and escalated to the Data Governance Committee should the conflict persist.

D4.6 Data taxonomy should be clearly defined, managed, and communicated to all relevant stakeholders. Wherever possible, data taxonomy should be developed based on regulatory framework, industry-recognized framework, or globally accepted practices. Data taxonomy should also be regularly reviewed to maintain relevance.

D4.7 Master data should be consistently and uniformly managed to create a single master record of a party, product, place, or account in Textile Exchange. Master data should be deduplicated, reconciled, and enriched as a consistent, reliable source.

D4.8 Metadata should be inventoried for each data element in a data registry. The data registry should cover (a) the data element name, (b) the definition of the data, (c) the relationship to other data elements, (d) data format, (e) reference policies, (f) ownership, and (g) storage. It should be mapped and shared as Data Input Specification to set the ingestion expectations from data providers.
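A data registry entry covering items (a) to (g) could be modeled as follows. This is a sketch under stated assumptions: the field names, the `RegistryEntry` class, and the `missing_metadata` helper are hypothetical, and an empty value is treated here as "not yet recorded".

```python
from dataclasses import dataclass, field, fields


@dataclass
class RegistryEntry:
    name: str = ""                                               # (a) data element name
    definition: str = ""                                         # (b) definition of the data
    related_elements: list[str] = field(default_factory=list)    # (c) relationships
    data_format: str = ""                                        # (d) data format
    reference_policies: list[str] = field(default_factory=list)  # (e) reference policies
    owner: str = ""                                              # (f) ownership
    storage: str = ""                                            # (g) storage


def missing_metadata(entry: RegistryEntry) -> list[str]:
    """List the registry fields that are still empty for this data element."""
    return [f.name for f in fields(entry) if not getattr(entry, f.name)]
```

A check like `missing_metadata` could be run before an entry is shared with data providers, so that gaps are visible early.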

D4.9 Naming and numbering conventions should be clearly defined, managed, and communicated. Naming and numbering conventions should be easy to maintain, contain key characteristics, and facilitate the unique identification of the data element.

D4.10 Payload and periodicity should be clearly defined, managed, and communicated. Payload and periodicity from data providers to Textile Exchange should reflect the time sensitivity of the data set.

D4.11 Deduplication rules should be defined, established, and documented to maintain a unique instance of a data element. The deduplication process should establish clear matching scenarios as well as potential matching outcomes, such as consolidate (match and merge), deduplicate (remove redundant data), enhance (add information to data), and link (create a relation between data without merging).
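The "consolidate (match and merge)" outcome can be illustrated with a short sketch. The matching scenario used here (names compared case-insensitively with whitespace collapsed) is a hypothetical example rather than a Textile Exchange rule, and the names `MatchOutcome`, `match_key`, and `consolidate` are assumptions made for illustration.

```python
from enum import Enum


class MatchOutcome(Enum):
    """The matching outcomes listed in D4.11."""
    CONSOLIDATE = "match and merge"
    DEDUPLICATE = "remove redundant data"
    ENHANCE = "add information to data"
    LINK = "create a relation between data without merging"


def match_key(record: dict) -> str:
    # Hypothetical matching scenario: same name, ignoring case and extra spaces.
    return " ".join(record["name"].lower().split())


def consolidate(records: list[dict]) -> list[dict]:
    """Keep one record per match key and fill its empty fields from later matches."""
    merged: dict[str, dict] = {}
    for record in records:
        key = match_key(record)
        if key not in merged:
            merged[key] = dict(record)
            continue
        for field_name, value in record.items():
            if merged[key].get(field_name) in (None, ""):
                merged[key][field_name] = value
    return list(merged.values())
```

In this sketch the first record seen wins on conflicting values; a documented rule set would need to state which source takes precedence.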

D4.12 Data validation should be clearly defined, managed, and communicated. Data validation should include the business statement of the data quality rule, the data quality rule specification, logic/calculation if applied, acceptance criteria, reporting criteria, and enforcement criteria.
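The elements of a data validation rule listed in D4.12 could be recorded in a structure like the one below. This is a minimal sketch: the class and field names are hypothetical, and expressing the acceptance criterion as a minimum pass rate is an assumption, since the policy does not prescribe how acceptance is measured.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class ValidationRule:
    business_statement: str          # plain-language statement of the rule
    specification: str               # data quality rule specification
    logic: Callable[[dict], bool]    # logic/calculation applied to each record
    acceptance_threshold: float      # assumed form: minimum share of passing records
    reporting: str                   # reporting criteria
    enforcement: str                 # enforcement criteria


def evaluate(rule: ValidationRule, records: list[dict]) -> tuple[float, bool]:
    """Return the pass rate and whether it meets the acceptance threshold."""
    if not records:
        raise ValueError("no records to validate")
    passed = sum(1 for record in records if rule.logic(record))
    pass_rate = passed / len(records)
    return pass_rate, pass_rate >= rule.acceptance_threshold
```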

D4.13 Data assurance processes should be clearly defined, managed, and communicated. Data assurance requirements should deliver demonstrable evidence by requiring:

a. One or more additional data elements for triangulation, and/or

b. One or more additional supporting documents (e.g., pdf copy of scope certificates or laboratory reports) as evidence.

Data Provider Engagement Policy

Effective from: 1 June 2023, Last updated: 1 June 2023

E1. Purpose

To establish a general framework for Textile Exchange to actively communicate and engage with its data providers to (a) align technical developments, (b) set expectations on the data provision, (c) ensure there are adequate resources to support the data provision, and (d) establish a feedback process for continuous improvements.

E2. Scope

This policy applies to all staff, service providers, suppliers, and others (collectively, “users”) provided with access to Textile Exchange data and systems and must be adhered to in processing all Textile Exchange data.

E3. Policy Statements

E3.1 Engagement with data providers, particularly relating to contractual arrangements, should be restricted to authorized staff.

E3.2 A data processing agreement shall be in place before onboarding any data providers.

E3.3 Textile Exchange should properly introduce and orientate data providers to Textile Exchange’s systems and relevant policies.

E3.4 Textile Exchange should provide adequate guidance materials so that data providers can effectively and efficiently transfer data.

E3.5 Textile Exchange should provide adequate support to data providers on the use of data systems. Clear and effective communication channels should be set up and communicated to data providers.

E3.6 Textile Exchange should establish a clear process to decommission system connections and offboard data providers who are no longer legitimate data providers.

E4. Procedures

E4.1 Data provider status. Only data providers with legitimate status (e.g., certification bodies with accredited licensing status) shall be onboarded to the Textile Exchange system.

E4.2 Contacts. Textile Exchange should reach out to data providers for a list of contacts for business, technical, and system error issues. The data provider contact list shall be used to communicate system updates and manage user access to guidance materials.

E4.3 Orientation. An orientation workshop should be provided to data providers outlining the onboarding steps and timeline expected from them. This should include:

- Completion of the data transfer specification,

- Review of the data input specification for data gaps,

- Establishment of the system connection, and

- Deadlines for the transfer of test and production data.

E4.4 Resources. Textile Exchange should make available to data providers all updated guidance materials. This should include technical documentation, instruction videos, data input specification, etc.

E4.5 Communication. Data providers should be promptly informed of system changes via email. A copy of the email should be saved for record keeping and logged in a registry for audit trail.

E4.6 Data transfer expectations. Textile Exchange should make clear to data providers what is expected of them for the data transfer. This should include the scope and boundaries of:

- The specification of the data to be provided,

- The payload and periodicity for the data transfer, and

- The data validation and conformance checks that will be carried out on the data received.

E4.7 Support. Textile Exchange should provide multiple support channels, including:

- Regular office hours to address any ad-hoc project, technical, and policy-related queries. These sessions are intended to facilitate non-confidential open queries from data providers, and

- One-to-one discussions on confidential matters.

E4.8 Feedback. Textile Exchange should encourage data providers to give project and/or technical feedback for continuous improvement.

Data Security Policy

Effective from: 1 June 2023, Last updated: 1 June 2023

F1. Purpose

This policy provides a uniform set of guidelines on how data and systems should be used and maintained in Textile Exchange to protect from unauthorized disclosure, modification, use, or destruction and ensure that its confidentiality, integrity, and availability are not compromised.

F2. Scope

This policy applies to all staff, service providers, suppliers, and others (collectively, “users”) provided with access to Textile Exchange data and systems and must be adhered to in processing all Textile Exchange data.

F3. Policy Statements

F3.1 Data is an asset that has value to Textile Exchange and must be protected from unauthorized use and disclosure.

F3.2 Equipment and systems that gate access to our data must similarly be protected from unauthorized use and disclosure.

F3.3 Data should be made available to those with a legitimate need for access to carry out their duties. Access to the system and data will be made based on access privilege and need to know in accordance with the Data Access Policy.

F3.4 All users are responsible for ensuring the secure and appropriate use of data.

F4. Procedures

F4.1 Data sensitivity classification. All Textile Exchange data shall be classified into one of four sensitivity classes: public, controlled, internal, and confidential. (See Data Sensitivity Classification Policy)

F4.2 User management. Data should be made available to users to carry out their duties in accordance with its classification level. (See Data Access Policy)

F4.3 All new staff are required to sign an agreement to protect Textile Exchange’s data confidentiality and follow Textile Exchange’s Data Security Policy, both during and after their employment with Textile Exchange.

F4.4 All new Textile Exchange staff are to receive mandatory data security awareness training as part of their induction.

F4.5 Any data security incidents resulting from non-compliance should result in appropriate disciplinary action.

F4.6 On termination of employment with Textile Exchange, the access privileges of departing staff to Textile Exchange information systems and data will be revoked. Emails and file spaces of the departing staff will be retained and archived.

F4.7 Access controls. Access to systems should be controlled. Wherever possible, individual access to systems should apply multifactor authentication, and entity access (i.e., API or SFTP) should apply code pairing authentication. All access control should be periodically reviewed and updated.

F4.8 User responsibilities. It is the responsibility of every user to safeguard the secure access and appropriate usage of Textile Exchange data and systems.

a. Users shall access Textile Exchange equipment and systems using a unique user identifier and an associated password. Wherever possible, multifactor authentication is applied.

b. Users shall use passwords generated by Textile Exchange’s designated password manager. All passwords should be managed in the same password manager. Textile Exchange admins will have access to these passwords.

c. Users should ensure that all equipment is suitably secured, especially when left unattended, to avoid the risk of accidents, interference, or misuse.

F4.9 All equipment should have time-out protection applied, which will automatically lock the equipment after a defined period of inactivity.

F4.10 All equipment shall have an up-to-date antivirus product installed.

F4.11 Users should never store data only on local or portable drives. Data should always be backed up to Textile Exchange’s cloud storage.

F4.12 Users shall treat information received via email from unknown sources with care due to its inherent security risks.

F4.13 Any loss of equipment and possible disclosure of Textile Exchange data must be reported as a data security incident to the Data Governance Manager in accordance with the Data Security Incident Policy.

F4.14 Users must return or dispose of all Textile Exchange equipment and data in their possession upon termination of their employment.

F4.15 Textile Exchange reserves the right to retrieve, use and read any data composed, transmitted, or received through online connections or stored on Textile Exchange equipment.

F4.16 Third-party access. Textile Exchange uses service providers (e.g., contractors, suppliers, outsourcing, and development partners) to help develop and maintain its systems. It also allows connectivity to external organizations (e.g., partner organizations, certified organizations, members) to provide value. This access should be managed in the following manner:

a. All third parties accessing Textile Exchange systems require a signed agreement in accordance with the Data Compliance Policy.

b. All third-party agreements that relate to data shall be reviewed and monitored by the Data & Technology Platform to ensure that data security requirements are satisfied.

F4.17 Risk assessment. Textile Exchange shall perform annual risk assessments to determine whether current security protocols are adequate. The scope of assessments can be either the whole organization, parts of the organization, a specific system, or a specific component of a system. The outcome of the assessments should lead to improvement measures that are reviewed and implemented by the Data & Technology Platform.

Data Security Incident Policy

Effective from: 1 June 2023, Last updated: 1 June 2023

G1. Purpose

This policy establishes clear guidelines for the proper and effective handling of data security incidents in a manner that minimizes the adverse effect on Textile Exchange and the risk of data loss to the data providers and data owners.

G2. Scope

This policy applies to all staff, service providers, suppliers, and others (collectively, “users”) provided with access to Textile Exchange data and systems and must be adhered to in processing all Textile Exchange data.

G3. Policy Statements

G3.1 A data security incident is an accidental or deliberate event that results in, or constitutes an imminent risk of, the unauthorized access, loss, disclosure, modification, disruption, or destruction of Textile Exchange data, particularly personal data and intellectual property. Examples of data security incidents include loss of devices and equipment carrying data, sending data to the wrong recipient, sending wrong data to a recipient, hacking incidents or illegal access to databases or devices, theft of devices and equipment carrying data, scams that obtain data through deception, and errors or bugs in programming that are exploited to gain data.

G3.2 All users shall promptly report a data security incident.

G3.3 Textile Exchange shall ensure that data incidents are:

- Reported, documented, and managed in a timely manner.

- Handled by appropriate authorized staff.

- Assessed by appropriate authorities to determine a risk mitigating action.

- Communicated to relevant bodies.

- Reviewed to identify improvements in policies and procedures.

G3.4 Textile Exchange shall appoint a Data Governance Manager to be responsible for the management of data security incidents.

G4. Procedures

G4.1 Identification and classification. Data security incidents should be reported promptly to the Data Governance Manager. The report should include full and accurate details of the incident/breach, including who is reporting the incident and the data class involved. (See Data Security Incident Form)

G4.2 Containment and recovery. Once details of the incident are known, the Data Governance Manager should liaise with relevant staff to contain the effect of the breach. Priority actions may include:

- Shut down the compromised system or device.

- Prevent further unauthorized access to the system or device.

- Reset passwords or accounts.

- Establish steps to recover lost data and limit the damage caused by the incident.

- Notify relevant authorities if criminal activity is suspected or confirmed.

- Cease practices that led to the incident.

- Address gaps in processes that led to the incident.

G4.3 Risk assessment. Once the incident is contained, the Data Governance Manager and staff should assess the risks associated with the loss of data with the following considerations:

- How many individuals are affected?

- What categories of individuals are affected (e.g., certification bodies, certified entities, members)?

- What types of data are involved (e.g., data program, data type, data class)? Is personal data involved?

- What caused the security incident (e.g., accidental, theft, hack, damage)?

- When and how did the security incident occur? Can it be repeated?

- Who might gain access to the compromised data?

- What is the impact of the security incident (e.g., reputation, financial, legal)?

- Who needs to be notified?

G4.4 Notification. Notifications should be sent to individuals and/or entities whose confidential data have been compromised, as well as relevant authorities when a data security incident is confirmed. Notifications should include:

- How and when the breach occurred.

- What data is involved.

- Suggested protection measures, if applicable.

- Actions taken by Textile Exchange.

- Contact for further information and assistance.

G4.5 Evaluation and response. Once the data security incident is resolved, the Data Governance Manager should assess the cause of the incident and evaluate whether additional protection and prevention measures can be taken to prevent a recurrence. The evaluation and response are reported to the Data Governance Committee for review and oversight. Consideration should be given to the following:

- Was the breach a result of inadequate policies or procedures?

- Was the breach a result of inappropriate training?

- Are the levels of data access sufficient?

- Is system and device security adequate?

- Has this breach identified potential weaknesses in other areas?

Change Management Policy

Effective from: 1 June 2023, Last updated: 1 June 2023

H1. Purpose

Uncontrolled changes to systems could potentially result in system disruption, data corruption/loss, or unmanaged costs. A formalized change management process is designed to ensure that changes are authorized, prioritized, and managed within an approved budget. This policy defines the formal requirements to manage changes to data and systems.

H2. Scope

This policy applies to all staff, service providers, suppliers, and others (collectively, “users”) provided with access to Textile Exchange data and systems and must be adhered to in processing all Textile Exchange data.

H3. Policy Statements

H3.1 Textile Exchange shall apply a formal approach to managing systems changes. Users should follow the procedures outlined in this policy for change requests on data and systems.

H3.2 Change requests include any creation of, addition to, or alteration of Textile Exchange data and/or systems.

H3.3 Change requests shall be documented, assessed for their viability, value, and cost before being processed, approved by relevant authorities before development, and fully tested and fully documented.

H3.4 Business value, business risks, technical risks (including the potential impact on performance and security risks), as well as costs shall be formally considered as part of the formal assessment.

H4. Procedures

H4.1 Request for change. A change request can be initiated by any user for a new build, upgrade, or improvement to a system, or for new data requirements or a modification or extension of existing data requirements. All requests must be documented and shall:

- Include information on change requester, change resources, and change implementer.

- Include change details sufficient to facilitate a clear assessment of requirements, resources, risks, and planning.

- Include change priority.

- Be communicated to appropriate stakeholders for consideration, assessment, and approval.

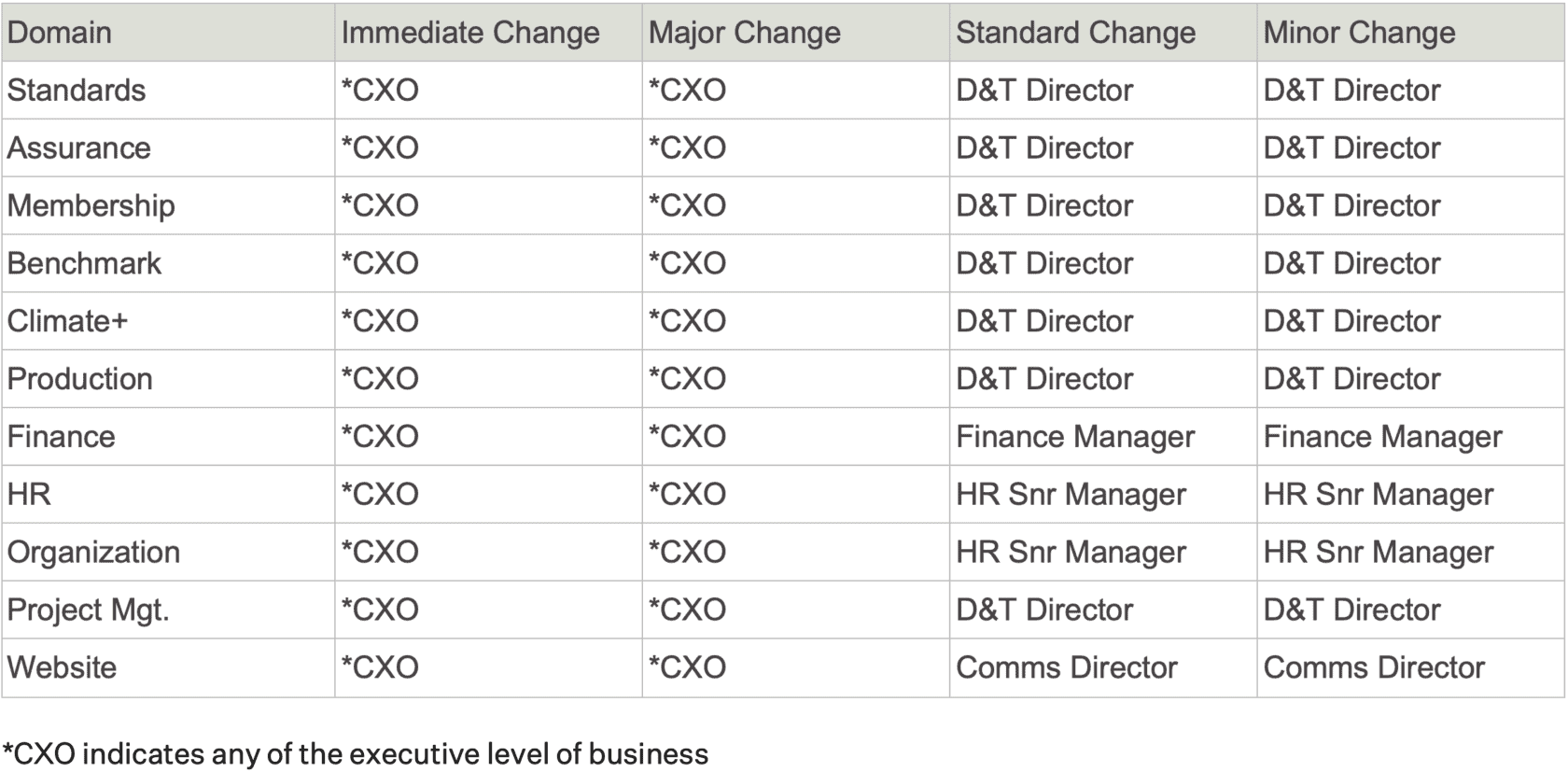

H4.2 Change priority. Change requests should be prioritized according to the following:

a. Immediate change. A time-sensitive change that requires immediate implementation to address an important issue that poses a high risk and/or impact on business operations.

b. Major change. A change that is relatively complex and poses a high risk and/or impact on business operations.

c. Standard change. A change that is relatively complex but poses a low risk and/or limited impact on business operations.

d. Minor change. A change that is relatively simple and poses a low risk and/or limited impact on business operations.

H4.3 Review of change requests. Change requests should be reviewed by appropriate approval authorities.

H4.4 Testing. All changes should be tested, when possible, prior to implementation in the production environment. Where possible, testing should be carried out using actual data. A test plan should be established with specific test scenarios, along with their desired outcomes and acceptance criteria.

H4.5 Implementation. Changes shall only be released in the production environment when all test results have achieved the desired outcome and have been accepted by stakeholders.

H4.6 Post-implementation review should be performed for immediate and major changes.

H4.7 Change log and backlog. All change requests should be registered in a change log along with their change priority and estimated effort and cost. Any change that is not prioritized for immediate implementation may be recorded in the change backlog. The change backlog should be regularly reviewed.
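The procedures in H4.1 to H4.7 can be illustrated with a minimal sketch of a change log. This is not a Textile Exchange system: the class and attribute names are hypothetical, and the sketch assumes that every request other than an immediate change is a candidate for the backlog.

```python
from dataclasses import dataclass, field
from enum import Enum


class Priority(Enum):
    # The four priorities named in H4.2.
    IMMEDIATE = "immediate"
    MAJOR = "major"
    STANDARD = "standard"
    MINOR = "minor"


@dataclass
class ChangeRequest:
    # Information required by H4.1 and H4.7 (attribute names are illustrative).
    requester: str
    resources: str
    implementer: str
    details: str
    priority: Priority
    estimated_effort_days: float
    estimated_cost: float
    approved: bool = False
    tests_passed: bool = False
    accepted_by_stakeholders: bool = False

    def ready_for_release(self) -> bool:
        # H4.3 and H4.5: approved, tested, and accepted before production release.
        return self.approved and self.tests_passed and self.accepted_by_stakeholders

    def needs_post_implementation_review(self) -> bool:
        # H4.6: reviews are performed for immediate and major changes.
        return self.priority in (Priority.IMMEDIATE, Priority.MAJOR)


@dataclass
class ChangeLog:
    entries: list = field(default_factory=list)

    def register(self, request: ChangeRequest) -> None:
        # H4.7: every change request is registered in the change log.
        self.entries.append(request)

    def backlog(self) -> list:
        # Assumption: anything that is not an immediate change may sit in the backlog.
        return [r for r in self.entries if r.priority is not Priority.IMMEDIATE]
```

A request only becomes releasable once all three flags are set, which mirrors the order of review, testing, and stakeholder acceptance described above.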

Data Retention and Recovery Policy

Effective from: 1 June 2023, Last updated: 1 June 2023

I1. Purpose

This policy safeguards Textile Exchange against data loss and sets out the recovery process from a hardware failure, data corruption, or a security incident. A backup policy is closely related to a disaster recovery policy, but since it protects against events that are relatively likely to occur, in practice it will be used more frequently than a contingency planning document.

The purpose of this policy is to provide a consistent framework to apply to the backup process.

I2. Scope

This policy applies to all staff, service providers, suppliers, and others (collectively, “users”) provided with access to Textile Exchange data and systems and must be adhered to in processing all Textile Exchange data.

I3. Policy Statements

I3.1 Textile Exchange shall define minimum controls required for the backup of its systems and data to meet organizational needs.

I3.2 Textile Exchange shall safeguard against the loss of data that may occur due to hardware or software failure, physical disaster, or human error.

I3.3 Textile Exchange shall take a risk-aware approach that identifies and addresses unacceptable risks while maintaining a knowledgeable and reasoned acceptance of other risks.

I4. Procedures

I4.1 Data to be backed up. The selection of data to be backed up should balance the criticality of the data against the burden of the backup process. The responsibilities for data backup are as follows:

I4.2 Data retention shall follow this protocol:

- Personal data: So long as the user retains a 12-month continuous engagement with Textile Exchange.

- Financial data: 7 years.

- Certification data: Lifespan of Textile Exchange standards.

- Organization data: So long as it continues to serve the purpose for which it is intended.

I4.3 Backup frequency is critical to successful data recovery. Textile Exchange has determined the following minimum backup schedule for our organizational data to allow for sufficient data recovery while avoiding an undue burden on administrators.

- Incremental backup: Every day

- Full backup: At least once and incremental thereafter.

I4.4 Backup retention will be determined to sufficiently mitigate risk while preserving required data. To that end, the backup retention policy is as follows.

- Incremental backups must be saved for at least two weeks.

- Full backups must be saved for at least one year.
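The minimum retention periods in I4.4 can be expressed as a simple check that administrators might run before deleting old backups. The sketch below is illustrative only: the function name and the backup kinds used as dictionary keys are hypothetical, and "one year" is assumed to mean 365 days.

```python
from datetime import date, timedelta

# Minimum retention periods from I4.4.
MIN_RETENTION = {
    "incremental": timedelta(weeks=2),
    "full": timedelta(days=365),  # assumption: "one year" is taken as 365 days
}


def may_be_purged(kind: str, taken_on: date, today: date) -> bool:
    """Return True once a backup has been kept for its minimum retention period."""
    return today - taken_on >= MIN_RETENTION[kind]


# An incremental backup taken on 1 June 2023 reaches two weeks on 15 June 2023.
print(may_be_purged("incremental", date(2023, 6, 1), date(2023, 6, 14)))  # False
print(may_be_purged("incremental", date(2023, 6, 1), date(2023, 6, 15)))  # True
```

The check only states when deletion becomes permissible; backups may be kept longer where other retention requirements apply.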

I4.5 Restoration procedures must be tested at least annually and documented by the administrators. Documentation should include how restoration is performed, under what circumstances it is to be performed, and how long it should take from request to restoration.

Privacy Policy

3. Principles

The following principles set the expectation on how data should be managed in Textile Exchange. They guide the consistent development of data governance policies and procedures across the organization.

Textile Exchange recognizes that information is a corporate asset and must be identified, protected, used, and managed consistently throughout its life cycle, meeting required levels of information quality and in alignment with these data governance policies.

Adherence to this policy is mandatory across all Textile Exchange departments that process (create/acquire/collect, use, transform, store) critical data. The data governance framework as defined in this policy applies to all data across the organization.

Departments must monitor and manage the quality of critical data which they originate. Clear accountabilities for managing data must be formalized by departments establishing roles outlined in this policy. This ensures that critical data is managed in a consistent manner across the organization for effective governance.

Information assets must be managed in keeping with their risk and value. Textile Exchange must have the means to measure and ensure that data quality standards are in place for defined critical data, and commensurate with the acceptable risk for data management.

All processes and systems that handle critical data must have the means to ensure that data quality standards are met in the originating systems, as well as in the Enterprise Data Warehouse.

Common standards and definitions are essential for data harmonization. For all critical data, there must be a single, traceable authoritative source, clarity of ownership, and a documented data lineage. Where critical data is duplicated, Enterprise Data Warehouse sourcing principles will guide the selection of an authoritative source and placement of data.

3.1 Data is an organizational asset. All structured data and unstructured data are organizational assets. Secure and effective management of these assets is critical to our success.

3.2 Single Source of Truth. Textile Exchange processes data in different ways from many sources. Creating a single source of truth for data is essential for data integrity and maximizing the value of data.

3.3 Data process or processing must comply with the law. Textile Exchange will take all necessary steps to comply with relevant data-related rules, laws, and regulations, particularly the General Data Protection Regulation (GDPR).

3.4 Data is of high quality and usability. Textile Exchange delivers quality (accurate, complete, reliable, relevant, and timely) data from which users can easily derive useful information to meet the organization’s strategic objectives.

3.5 Data is safe and secure. Textile Exchange will take all necessary steps to ensure that data is protected from unauthorized access and loss, and that recovery measures are in place to manage data security incidents.

3.6 Data is used properly. Textile Exchange encourages the use of data for assessments and decision-making. The use of data (both internally and shared externally) must be legal, ethical, and appropriate.

4. Data Governance Framework

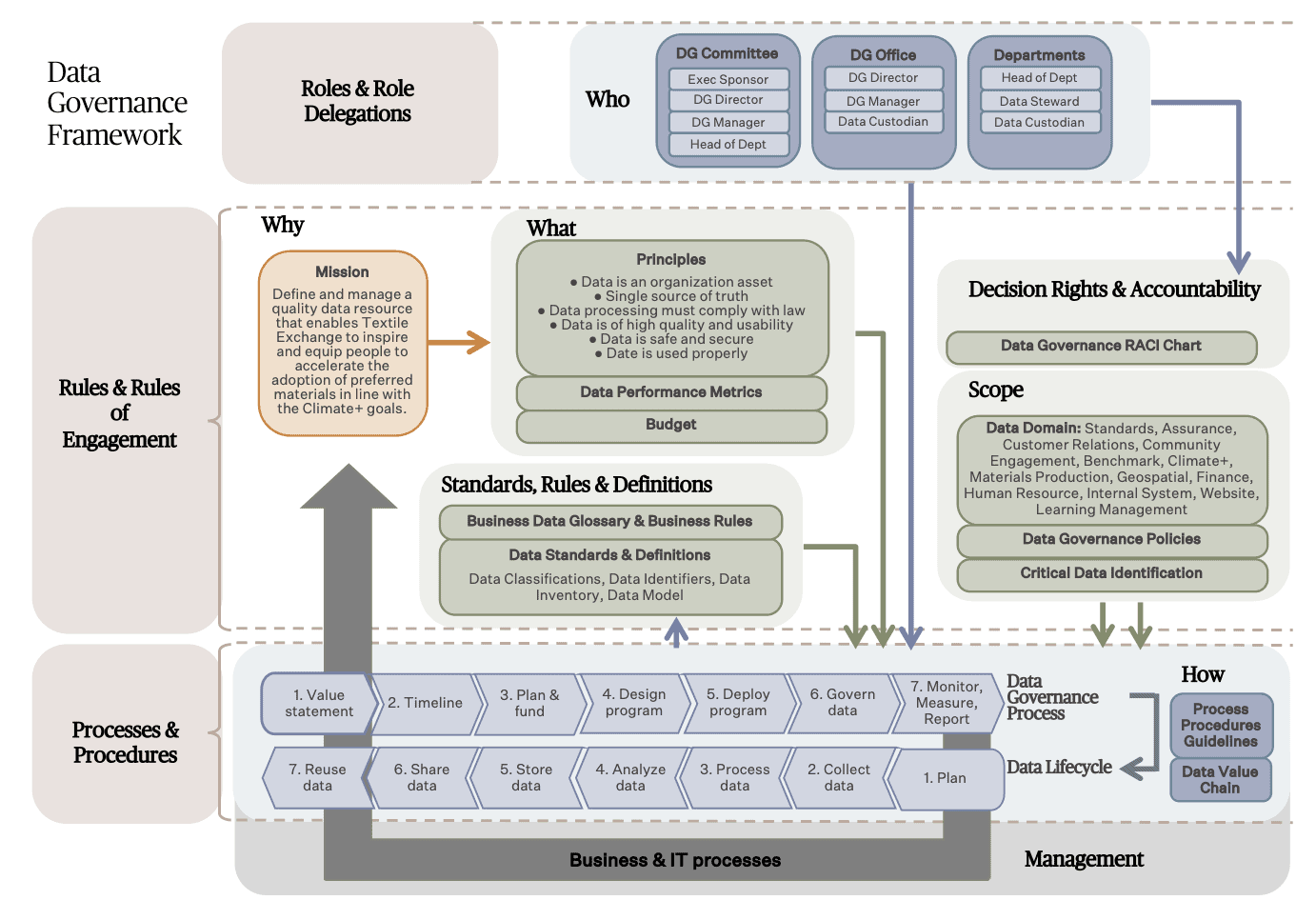

Textile Exchange’s data governance framework lays out the role delegations, rules of engagement, and normative resources (i.e. Data Governance RACI Chart, Data Governance Policies, Critical Data Identification, Data Performance Metrics, Business Data Glossary, Business Rules, Data Classifications, Data Identifiers, Data Inventory, Data Model, Data Value Chain, Data Processes/Procedures/Guidelines) required for the proper management of organizational data.

The following diagram outlines how the data governance framework shall be implemented in Textile Exchange.

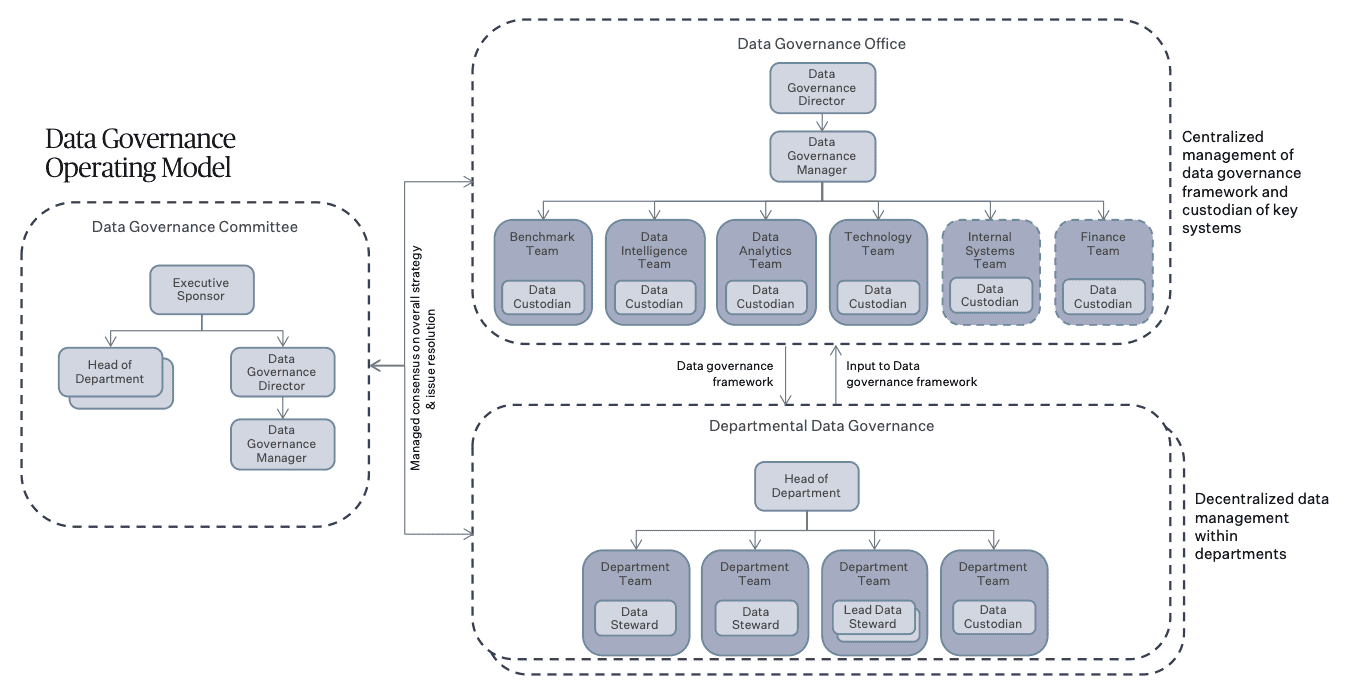

5. Data Governance Operating Model

Textile Exchange’s data governance operating model summarizes the roles of key data stakeholders within the organization. It is based on a hybrid approach that combines centralized governance with decentralized management of data.

5.1 The centralized component is a single Data Governance Committee and Data Governance Office that governs all critical data.

The committee, headed by the Executive Sponsor, comprises relevant Heads of Department from across the organization alongside the Data Governance Director and the Data Governance Manager.

The Data Governance Office is made up of the Data Governance Director, Data Governance Manager, and Data Custodians of key systems within the organization, including Benchmark, Data Intelligence, Data Analytics, Technology, Internal Systems, and Finance. The office has the primary responsibility for developing and gaining approvals for normative resources and implementing them across the organization. The office also manages communications, training, reporting, budgeting, and escalation of issues to ensure that data is managed consistently across the organization.

5.2 The decentralized aspect is that all functional data management activities remain within the reporting lines of each department. The Data Stewards who manage data report to the Heads of Department while executing standards, policies, and processes defined by the Data Governance Office and approved by the central Data Governance Committee. In departments with more than one Data Steward for a data domain, a Lead Data Steward should be appointed.

6. Roles and Responsibilities

The data governance roles defined below speak only to the responsibility of a position pertaining to data governance and do not correlate to the actual functional and hierarchical organizational structure in Textile Exchange. Depending on resource availability, one role may be assigned to multiple persons or multiple roles may be assigned to one person. Additionally, a role that rightly falls under the purview of an individual may be delegated to another.

6.1 Data Governance Executive Sponsor provides strategic directives and oversight on the overall development and performance of the data governance framework. The Executive Sponsor is the final authority on data governance. Responsibilities include:

- Provides strategic vision and executive direction for data governance policies based on the corporate strategy.

- Champions data governance across the organization.

- Updates relevant regulatory authorities on the progress of data governance policy implementation, where necessary.

- Arbitrates data-related issues as part of the escalation.

- Final authority on exceptions to this policy, data governance framework, and escalation.

- Appoints and chairs the Data Governance Committee.

- Updates the Governance Board on data governance implementation and performance status.

- Secures budget and resources to implement the data governance framework.

- Establishes necessary communities for data domains and data stakeholders.

6.2 Data Governance Committee is chaired by the Executive Sponsor and comprises the relevant Heads of Department alongside the Data Governance Director and the Data Governance Manager. It reviews, approves, and resolves data governance policies as well as normative resources to consistently manage data across the organization. Responsibilities include:

- Ensures that data governance supports the organizational strategy.

- Drives data governance with all internal and external stakeholders.

- Reviews and approves data governance policies.

- Reviews the digital strategy, roadmap, and development status.

- Reviews the continuous improvement of the data governance framework.

- Shares departmental data governance best practices.

- Reviews the alignment and effectiveness of the domain across departments.

- Coordinates data governance efforts across departments.

- Reviews data governance exceptions and remediation.

- Resolves escalated data issues.

6.3 Data Governance Director leads the overall development, implementation, and update of the data governance framework. Responsibilities include:

- Defines the organizational data and digital strategy, including data architecture, governance, management, and analytics.

- Drives the implementation of the data governance framework across the organization.

- Ensures clear guidance on data-related roles and responsibilities.

- Leads the development and implementation of data governance policies.

- Oversees the development, standardization, and uniform application of data standards, rules, and definitions.

- Oversees the development, standardization, and uniform application of data processes, procedures, and guidelines.

- Leads the development of data performance metrics.

- Leads the documentation and application of business rules.

- Ensures the data governance framework meets all regulatory requirements.

- Monitors the implementation of the data governance framework and reports to the Data Governance Committee.

- Reports data issues and associated risks to the Data Governance Committee.

- Manages the budget and resources for data governance.

- Sets priorities and makes decisions on key data governance issues.

- Resolves issues in all aspects of data governance.

6.4 Data Governance Manager manages the development, implementation, and update of the data governance framework. Responsibilities include:

- Supports the development of the data governance strategy and framework.

- Ensures the consistent implementation of data governance across the organization. Where necessary, resolves conflicts between departments.

- Provides guidance on data access management.

- Manages the development, standardization, and uniform application of data standards, rules, and definitions.

- Manages the development, standardization, and uniform application of data processes, procedures, and guidelines.

- Defines and monitors data performance metrics.

- Establishes clear data quality measures for critical data.

- Ensures that adequate controls are in place for the quality, access, and usage of data.

- Manages data quality issues, develops remediation plans, and monitors the progress of remediation activities.

- Ensures that the lineage for critical data is in place.

- Escalates data issues and associated risks to the Data Governance Director.

- Monitors systems development and the resulting impact on critical data.

- Manages the development of data governance communication and training.

- Manages and reports on data security incidents.

- Supports the smooth operation of the Data Governance Committee.

6.5 Head of Department leads an operational unit accountable for the critical data of one or more data domains (e.g. Climate+, Standards & Assurance, Fibers & Materials, Benchmark, Membership, Impact Incentives). The Head of Department is accountable for executing the data governance framework and all data management activities within the unit, including access management. Responsibilities include:

- Works with the Data Governance Office to develop a data governance strategy and workplan for the department.

- Ensures the data governance framework supports business objectives and priorities.

- Works with the Data Governance Office to develop data performance metrics; data standards, rules, and definitions; and data processes, procedures, and guidelines.

- Actively participates in the Data Governance Committee.

- Shares data management best practice with other departments.

- Accountable for the data ownership of data domains.

- Accountable for the identification of critical data in data domains.

- Leads data governance implementation within the department.

- Ensures data management within the department is carried out in accordance with the data governance framework.

- Ensures departmental staff are trained on the data governance framework.

- Addresses data issues within the department and with relevant stakeholders.

- Escalates data issues to the Data Governance Office.

6.6 Data Steward manages an operational unit for the critical data of a data domain, where data originates or is first collected, regardless of format (e.g. Preferred Fiber & Materials Report, Climate+ Impact Modelling, Assurance). The Data Steward is responsible for all data management and quality within the unit as well as the full lifecycle of the data. Responsibilities include:

- Executes data governance framework for the unit.

- Identifies and documents critical data for the data domain.

- Ensures critical data meets the data quality requirements within the unit.

- Monitors and reports on the data quality issues of critical data.

- Identifies data quality issues and escalates where necessary.

- Works with the Data Governance Office on remediation plans for data quality issues.

- Prioritizes, executes, and reports on data quality remediation.

- Assesses data risks within the unit, particularly in relation to data quality, and works with the Data Governance Office to mitigate risks.

- Works with the Data Governance Office to define criteria for access management for the data domain.

- Works with the Data Governance Office to define business data glossary and business rules for the data domain.

- Ensures consistent understanding and application of business data glossary and rules within the unit.

- Works with the Data Governance Office to define data standards, rules, and definitions for the data domain.

- Executes data standards, rules, and definitions within the unit.

- Ensures that data standards, rules and definitions are aligned with business.

- Ensures consistent understanding and application of data standards, rules, and definitions within the unit.

- Works with the Data Governance Office to define data processes, procedures, and guidelines for the data domain.

- Executes data processes, procedures, and guidelines within the unit.

- Ensures the data processes, procedures and guidelines are aligned with business.

- Ensures that system implementation timelines are aligned with business.

- Develops and executes test scenarios and acceptance for system implementation.

- Ensures changes within the unit that impact data governance are communicated promptly to the Data Governance Office.

- Ensures impact resulting from data governance implementation and remediation is communicated promptly to affected stakeholders.

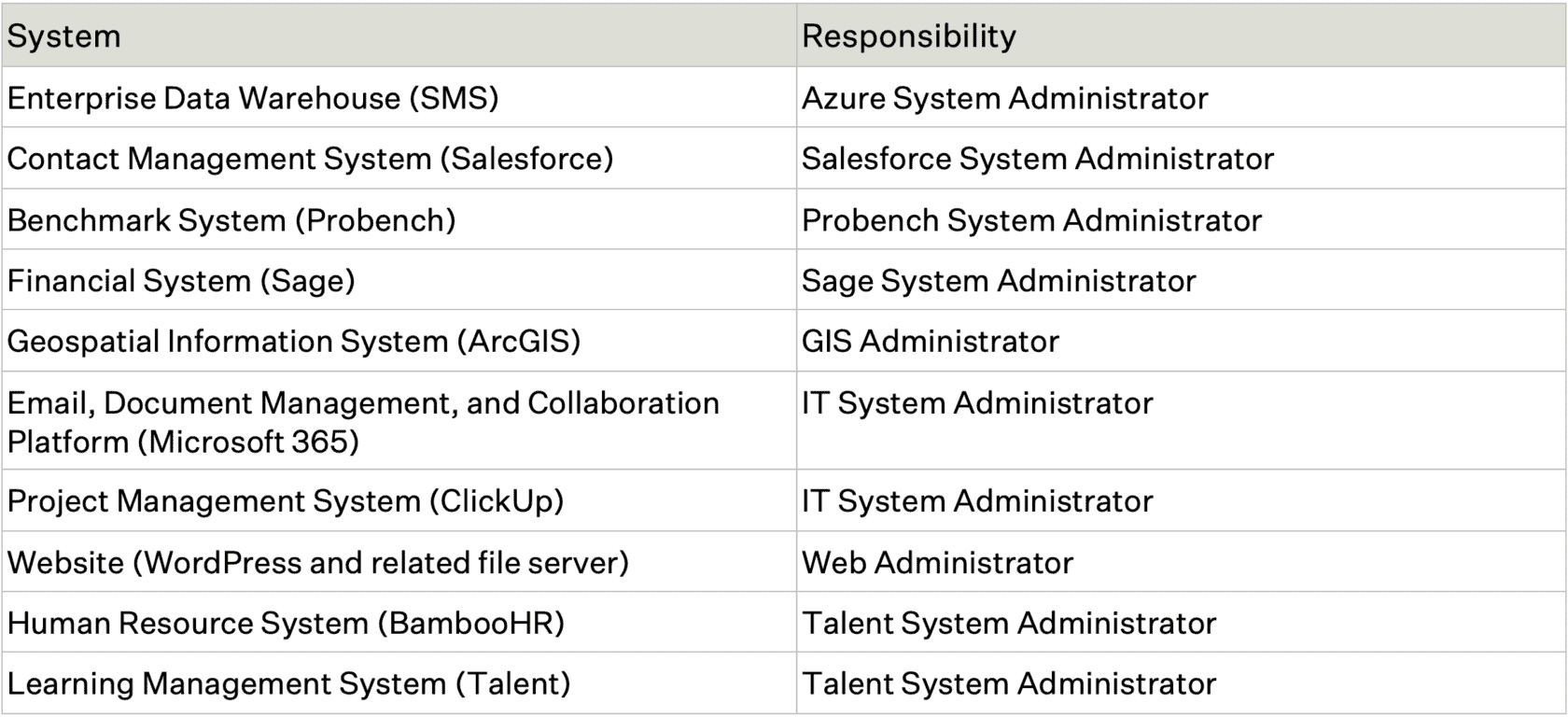

6.7 Data Custodian manages one or more systems (or technical environments) where data is stored. This can be a technology-managed application, a business-managed system, or a manual system. The Data Custodian processes, stores, and/or maintains data in a system and/or technical environment for a data domain. Responsibilities include:

- Executes data governance framework for systems in custody.

- Manages one or more systems where data is stored.

- Ensures data best practices are applied in system design and development.

- Aligns systems with organizational technical architecture and data model.

- Manages access to systems in custody.

- Ensures security and quality controls for systems in custody.

- Manages change requests for systems.

- Supports departments in the identification of critical data.

- Provides technical input to data quality issue remediation.

- Executes data quality remediation for systems in custody.

- Ensures documentation and timely update of data lineage.

- Supports departments in the development of business data glossary and rules.

- Develops and/or supports the development of data standards, rules, and definitions for data domains in custody.

- Executes data standards, rules, and definitions for data domain in custody.

- Ensures the timely update of data standards, rules, and definitions.

- Develops or supports the development of data processes, procedures, and guidelines for data domains in custody.

- Executes data processes, procedures, and guidelines for data domain in custody.

- Ensures the timely update of data processes, procedures, and guidelines.

- Develops and/or delivers data governance communication and training for the data domain or systems in custody.

- Ensures impact resulting from systems implementation and remediation is communicated promptly to affected stakeholders.

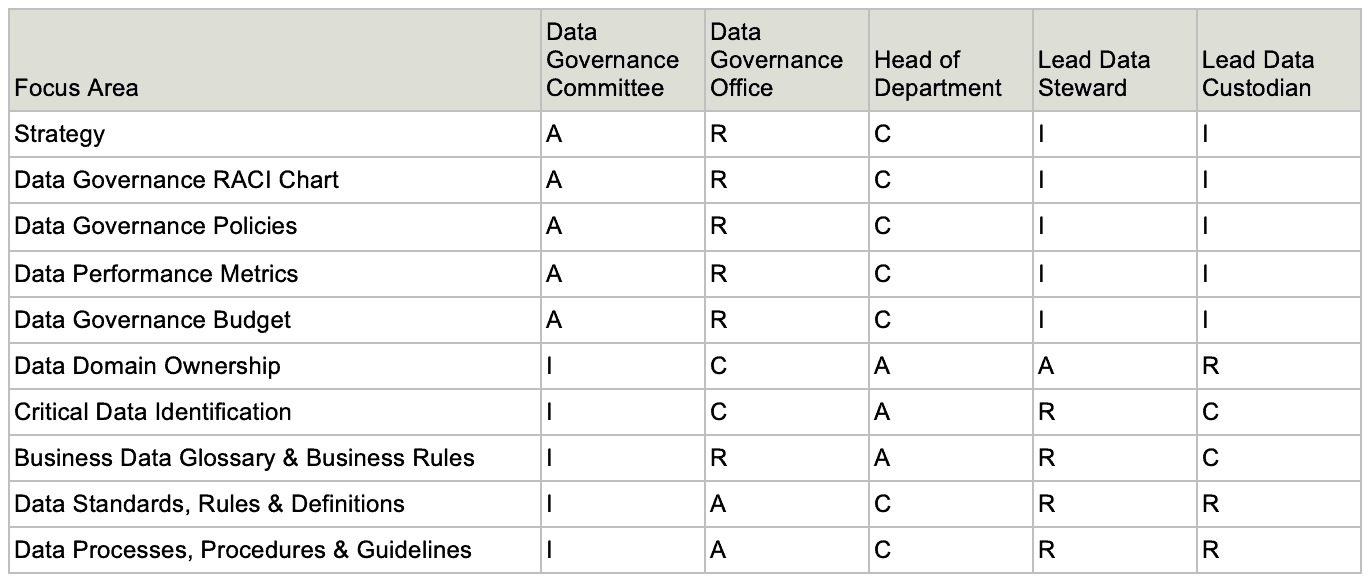

7. Responsible, Accountable, Consulted, Informed (RACI) Chart

- R – Responsible: Does the work to complete the task.

- A – Accountable: Delegates work and is the last one to review the deliverable before it’s deemed complete.

- C – Consulted: Provides input based on either how it will impact their future project work or their domain of expertise on the deliverable itself.

- I – Informed: Needs to be kept in the loop on project progress, rather than roped into details of every deliverable.

8. Critical Data Identification

Not all data in Textile Exchange pose the same degree of risk. This Policy assigns accountabilities for critical data, defined as data that is governed by or required for risk management, regulatory compliance, and financial reporting, or data that is essential for business growth or senior leadership decision-making (i.e., key business leadership forums).

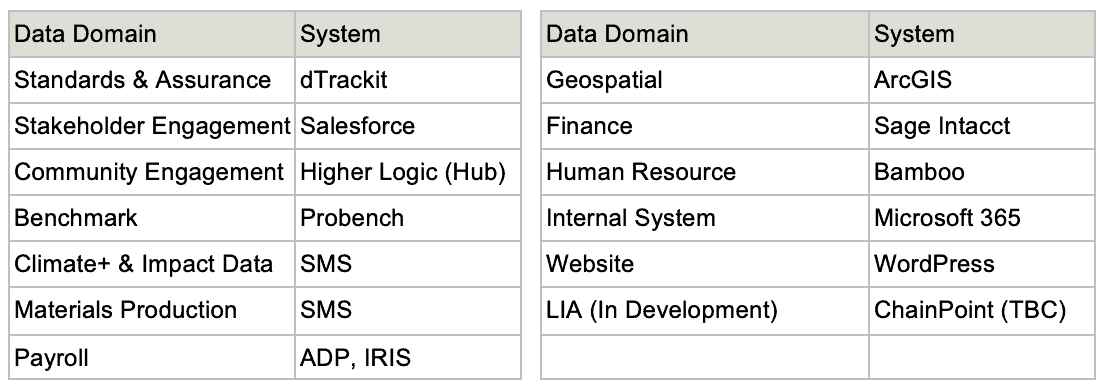

Data domains that hold critical data for Textile Exchange are identified below:

9. Data Value Chain & Processes

9.1 Data Value Chain

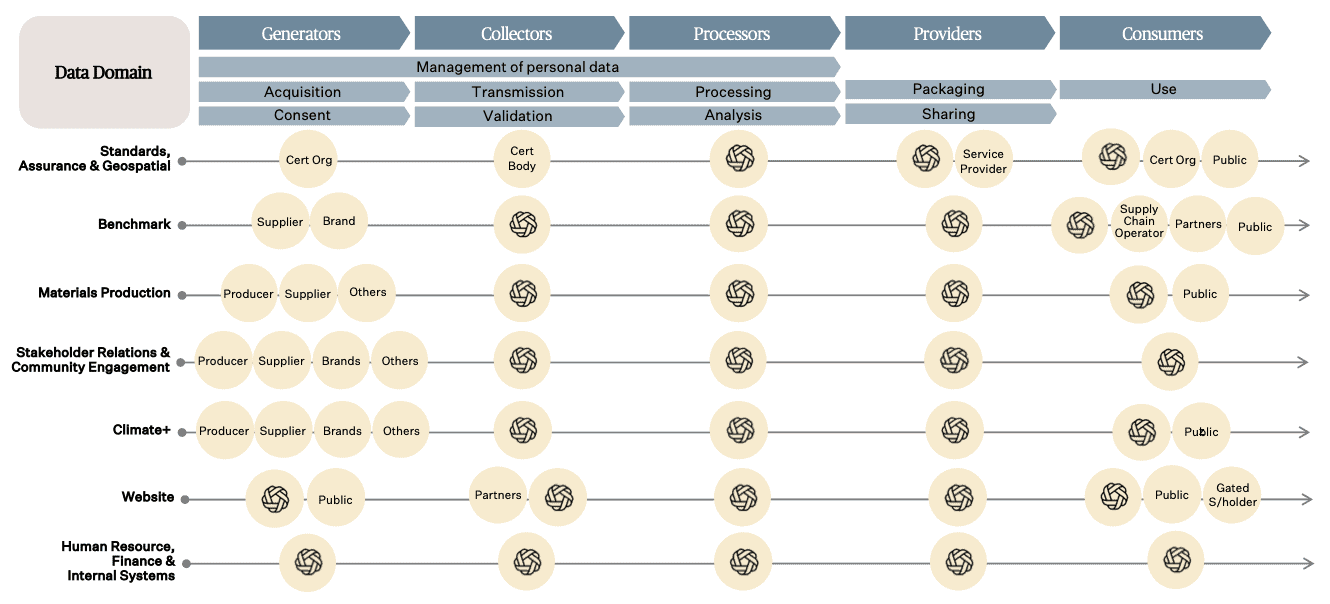

The following diagram outlines Textile Exchange’s role in the data value chains for various data domains.

9.2 Data Lifecycle

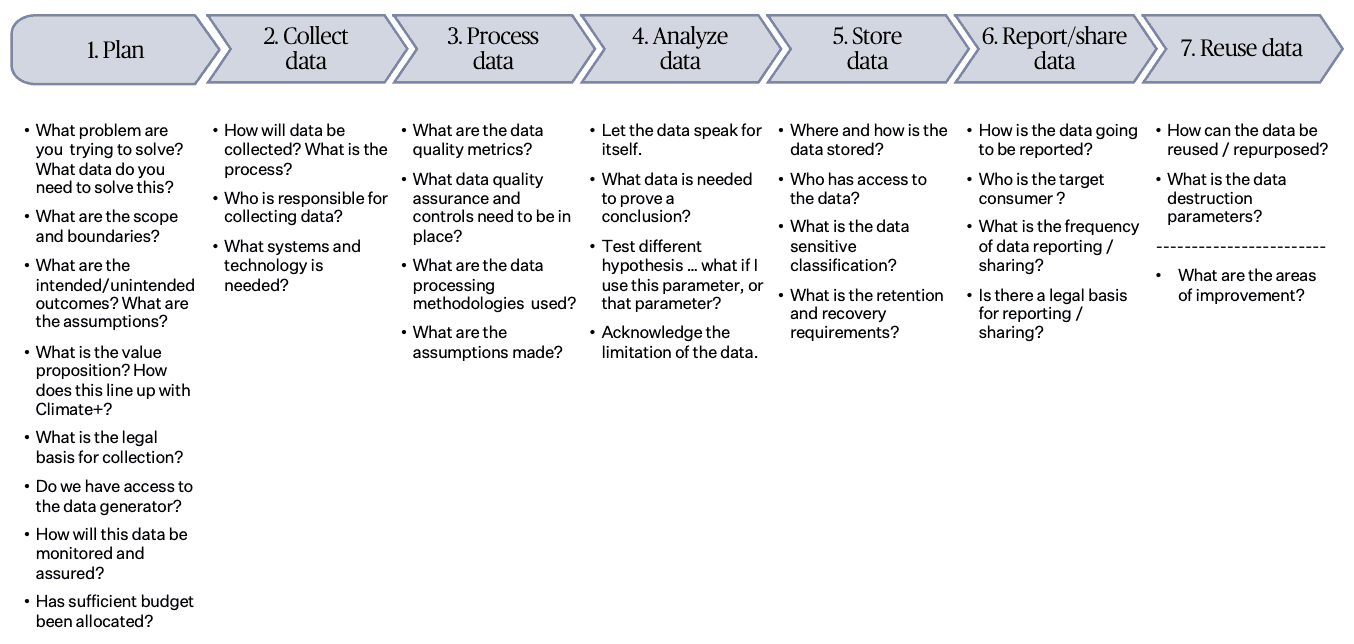

The following diagram outlines key considerations to be taken at each stage of the data lifecycle.

9.3 The role of assurance in data governance

Each department is responsible for defining monitoring and evaluation data for the program under its purview:

(a) Monitoring data means ongoing measurement of a set of indicators that are tracked regularly over time. The focus is generally on tracking the use of inputs, activities, outputs, and short-term outcomes of an intervention. (ISEAL)

(b) Evaluation data means the comparison (evaluation) of actual results and impacts obtained against plans or objectives. Evaluations may look at efficiency, effectiveness, medium-term outcomes, or impacts. Unlike monitoring, each evaluation may be a stand-alone activity, looking at a different set of evaluation questions. (ISEAL)

The Operational Compliance department plays a unique role in data governance in that it provides the program assurance and oversight by ensuring that (a) the program is implemented consistently, competently, and impartially, (b) the program risks are managed, (c) the assurance model is fit for purpose, and (d) the program assurance system is accessible and adds value to its stakeholders. To do so, Operational Compliance is responsible for legal compliance and defining assurance data required for a program.

(c) Assurance data means demonstrable evidence that specified requirements relating to a program, product, process, system, person, or body are fulfilled (Adapted from ISO 17000).

10. Communications and Training

10.1 Communications. This policy will be communicated to stakeholders publicly via the organization’s website textileexchange.org.

10.2 Training. Textile Exchange should foster a data culture in the organization.

(a) Data governance training should be provided as part of staff induction and continuous development.

(b) All data users should be trained in relevant data governance policies before access is given. This should include understanding the potential consequences of non-compliance.

11. Review Process

This policy will be reviewed and updated at least annually to keep pace with any data and system developments. In periods of rapid change, this policy may be modified and updated as needed to reflect current priorities.

12. Escalations and Exceptions

Data governance issues should first be escalated within a department. Unresolved issues may be escalated to the Data Governance Office, then the Data Governance Committee. The Executive Sponsor is the final authority over all data governance issues.

There are no exemptions from implementing and sustaining data governance across Textile Exchange. All exceptions to this policy should be documented and brought to the Data Governance Director for approval. He/she may delegate decisions for non-material exceptions to members of his/her team, provided all exceptions are tracked and monitored. Affected departments should be able to review exceptions and provide feedback on the decision process. Challenges to material exceptions may be referred to and resolved by the Data Governance Committee.

It is understood that certain circumstances may cause the need for additional time and funding to meet the requirements of this policy. The rationale for deferrals should be documented and approved. The rationale for deferral should be accompanied by a resolution to be actioned within a reasonable period, or this policy must be revised to permit the exception condition to exist.

13. Definitions

assurance data means demonstrable evidence that specified requirements relating to a program, product, process, system, person, or body are fulfilled (Adapted from ISO 17000).

critical data is data that is governed by or required for risk management, regulatory compliance, and financial reporting; or data that is essential to support business growth or senior leadership decision-making (i.e., key business leadership forums).

data means information, especially facts or numbers, examined, considered, and used in calculating, reasoning, discussion, planning, or decision-making. Data can be considered the building blocks of ‘information.’ ISO defines data as a “reinterpretable representation of information in a formalized manner suitable for communication, interpretation, or processing.” (Adapted from ISO/IEC 2382)

data access means the ability to access, change or delete data elements stored in a repository such as a database.

data culture means the collective behaviors and beliefs of people who value, practice, and encourage the use of data to improve decision-making.

data domain means a high-level categorization of business data defined for the purpose of assigning accountability and responsibility (e.g. Certification, Benchmark, Materials Production). Although data domains may often appear to map to Textile Exchange’s organizational structure, they need not do so. Data domains may comprise smaller sets of data known as sub-domains if the need arises (e.g. scope certification data, organic cotton production data).

data element means the fundamental data structure in a data processing system or any unit of data that has a precise meaning or precise semantics, such as certified organization name and land area.

business data glossary means the inventory of business terms, definitions, and taxonomy within a data domain.

data governance framework means a collection of policies, processes, and role delegations that ensures the integrity, security, and compliance in an organization’s enterprise data management. Synonymous: data governance program.

data governance means the overall management of the availability, usability, integrity, and security of the data employed in an organization. (ISEAL)

data governance program means a governing mechanism, which includes a defined set of procedures and a plan to execute those procedures. (Adapted from ISEAL)

data harmonization is the method of unifying disparate data fields, formats, dimensions, and columns into a composite dataset.

data identifier means a language-independent label, sign or token that uniquely identifies an object within an identification scheme.

data inventory means an inventory of all the data assets (source data along with its metadata) maintained by Textile Exchange as well as the information regarding its scope, source, provider, and storage location.

data lineage is the description of the movements and transformations of data from point of origin or derivation to consumption.

data process or processing means any operation or set of operations that are performed on data, whether or not by automated means, such as collection, recording, organization, structuring, storage, adaptation or alteration, retrieval, consultation, use, disclosure, dissemination, erasure or destruction.

data provider means the entity that is disclosing data for processing and/or uses by the data recipient.

data quality determines the level of confidence in Textile Exchange data and is defined in the context of seven data quality dimensions. It speaks to the state of completeness, validity, consistency, timeliness, and accuracy that makes data appropriate for a specific use. It is a perception or an assessment of the information’s fitness to serve its purpose in a given context. Data quality is affected by the way data is entered, stored, and managed. Data quality includes defined data quality thresholds that determine whether data quality is within required tolerances.

data quality dimensions are based on best practices and include:

- data completeness is the extent to which required data within records is collected and provided. A data value is complete when the field storing it contains information.

- data validity indicates the degree to which data conforms to a specified range of values, a specific format or is found in a reference list.

- data precision refers to the exactness of data, e.g., the number of significant digits to which a numeric value is measured relative to its usage (100.00 is more precise than 100), or precision of a timestamp to include milliseconds.

- data uniqueness is the degree to which different records can be distinguished, typically requiring that a key datum uniquely identifies a record and exists only once in a given group of records.

- data integrity indicates the reliability of data across systems and flows. Integrity measures whether data remains unchanged from source to destination or, if changed, whether transformations have results consistent with defined expectations. Data integrity also means that data values make sense in the context of other relevant data, e.g., an account opening date cannot be after the first transaction date.

- data timeliness is the degree to which data is available at the time it needs to be utilized, typically measured as latency (e.g., the time between when information is generated and when it is available for use) or recency (e.g., having the most recent data for reporting).

- data accuracy is the degree to which data correctly reflects a real-world object, event or fact being described, e.g., our record for the headquarters address of a customer is where that customer is physically domiciled.
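Two of the dimensions above, completeness and uniqueness, lend themselves to simple measurement. The following is a minimal sketch rather than a prescribed method: the function names, the sample records, and the field names `id` and `organization_name` are hypothetical, and an empty record set is assumed to score 1.0.

```python
from collections import Counter


def is_filled(value) -> bool:
    """A value counts as present when it is not None and not blank."""
    return value is not None and str(value).strip() != ""


def completeness(records, field_name) -> float:
    """Share of records in which the required field contains information."""
    if not records:
        return 1.0  # assumption: nothing to check means nothing is missing
    return sum(1 for r in records if is_filled(r.get(field_name))) / len(records)


def uniqueness(records, key) -> float:
    """Share of records whose key value exists only once in the group."""
    if not records:
        return 1.0
    counts = Counter(r.get(key) for r in records)
    return sum(1 for r in records if counts[r.get(key)] == 1) / len(records)


records = [
    {"id": 1, "organization_name": "Mill A"},
    {"id": 2, "organization_name": ""},
    {"id": 2, "organization_name": "Mill C"},
    {"id": 3, "organization_name": None},
]
print(completeness(records, "organization_name"))  # 0.5
print(uniqueness(records, "id"))  # 0.5
```

Both functions return a share between 0 and 1, which can then be compared against whatever tolerance is set for the data element.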

data quality rule means the logic, including a pass/fail criterion, to analyze the quality of data, e.g., year must be 4 digits. For example, a data element is considered to pass this rule if its value is “2022” or “2023.”

data quality standard means the objective and the overall scope of the data quality management framework defined with reference to specific data quality dimensions.

data quality threshold means the minimum acceptable pass rates for Data Quality Rules. E.g., 100% threshold means that there is no tolerance for errors.
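As an illustration of how a data quality rule and a data quality threshold work together, the sketch below applies the "year must be 4 digits" rule to a set of values and compares the pass rate with a threshold. The function names are hypothetical, and the threshold is assumed to be expressed as a fraction, so that a 100% threshold is written as 1.0.

```python
import re


def year_is_four_digits(value) -> bool:
    """Data quality rule: the value must consist of exactly four digits."""
    return re.fullmatch(r"[0-9]{4}", str(value)) is not None


def pass_rate(values, rule) -> float:
    """Fraction of values that pass the given rule."""
    values = list(values)
    if not values:
        return 1.0  # assumption: an empty set has no failures
    return sum(1 for v in values if rule(v)) / len(values)


def meets_threshold(values, rule, threshold: float) -> bool:
    """True when the pass rate is at or above the minimum acceptable rate."""
    return pass_rate(values, rule) >= threshold


years = ["2022", "2023", "23", 2023]
print(pass_rate(years, year_is_four_digits))             # 0.75
print(meets_threshold(years, year_is_four_digits, 1.0))  # False
```

With a threshold of 1.0 the single malformed value "23" is enough to fail the check, which matches the "no tolerance for errors" reading of a 100% threshold.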

data security incident means an accidental or deliberate event that results in or constitutes an imminent risk of the unauthorized access, loss, disclosure, modification, disruption, or destruction of Textile Exchange data, particularly personal data.

data taxonomy means a hierarchical structure separating data into specific classes based on common characteristics. The taxonomy represents a convenient way to classify data to prove it is unique and without redundancy. This includes both primary and generated data elements.

data user means any authorized entity or individual that has been granted access rights to Textile Exchange data and/or systems to perform an agreed set of activities.

disclosure means the voluntary reporting or sharing of data publicly or to a specific third party.

evaluation data means the comparison (evaluation) of actual results and impacts obtained against plans or objectives. Evaluations may look at efficiency, effectiveness, medium-term outcomes, or impacts. Unlike monitoring, each evaluation may be a stand-alone activity, looking at a different set of evaluation questions. (ISEAL)

full backup means backup of the entire database system (including transaction log).

identifiable data means any data that can be used to distinguish or trace an individual or entity’s identity and any information that is linked or linkable to an individual or entity.

incremental backup means backup of only the changes that have been made since the last backup, whether full or incremental.

information means knowledge concerning objects such as facts, events, things, processes, ideas, or concepts that, within a certain context, have a particular meaning. (Adapted from ISO/IEC 2382)

metadata is essentially data about data. It is used to describe characteristics such as content, quality, format, location, and contact information of physical or digital data. Metadata helps ensure that data is discoverable, citable, reusable, and accessible in the long term.

monitoring data means ongoing measurement of a set of indicators that are tracked regularly over time. The focus is generally on tracking the use of inputs, activities, outputs, and short-term outcomes of an intervention. (ISEAL)

payload means the carrying capacity of a packet or other transmission data unit.

periodicity means the frequency of data transfer by the data provider to Textile Exchange.

personal data means data in any format that relates to an identified or identifiable living person. An identifiable living person is someone who can be identified directly or indirectly from an identifier such as a name, an identification number, location data, an online identifier, or one or more factors specific to the physical, physiological, genetic, mental, economic, cultural, or social identity of that person.

project means a temporary endeavor undertaken to create a unique product, service, or result. (PMBOK® Guide)

program means a group of related projects managed in a coordinated way to obtain benefits and control not available from managing them individually. Programs may contain elements of work outside of the scope of the discrete projects in the program. (PMBOK® Guide)

raw data means the original data (record), which can be described as the first capture of information, whether recorded on paper or electronically. Synonym: source data.

record means information created, received, and maintained as evidence and as an asset by an organization or person, in pursuit of legal obligations or in the transaction of business. (Adapted from ISO 15489-1:2001)

retention means the agreed length of time an organization will keep different types of records. Retention schedules are policy documents that support compliance with legislative and regulatory requirements.

sensitive personal data means personal data on racial/ethnic origin, commission/alleged commission of an offense, political opinions, religious or philosophical beliefs, trade union membership, genetic/biometric data, data concerning health, or data concerning a natural person’s sex life/sexual orientation.

service provider means any individual or entity that is contracted by Textile Exchange to process its data or to develop, maintain, or update its systems.

single source of truth (SSOT) is the practice of aggregating the data from many systems within an organization to a single location. An SSOT is not a system, tool, or strategy, but rather a state of being for an organization’s data in that it can be found via a single reference point.

system means any information or data system designed to collect, process, store, and distribute data, such as technology platforms, databases, data warehouses, websites, applications, computer hardware, and computer equipment.